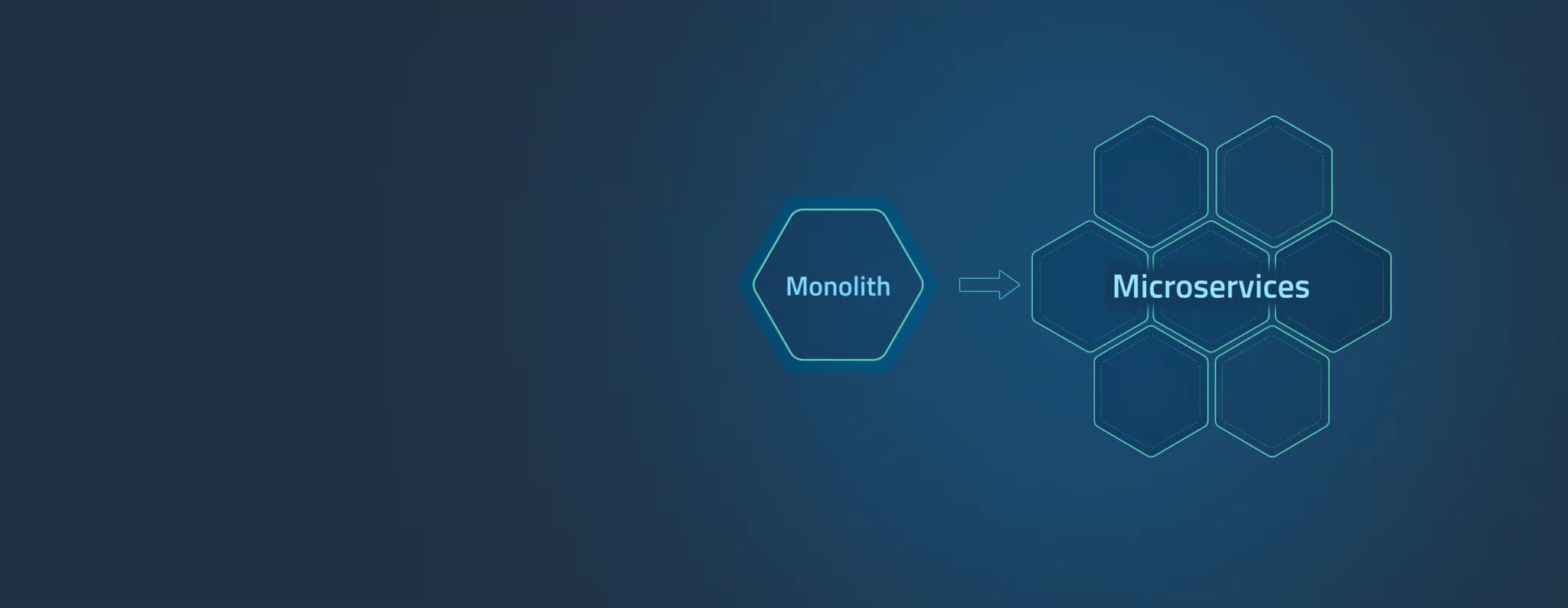

Migrating a Python Django DRF Monolith to Microservices - Part 2: Dockerizing the Microservices

Containerization is a crucial step in preparing your microservices for deployment. By using Docker, we can package each microservice with its dependencies, ensuring consistency across development, testing, and production environments. In this part, we will:

Write Dockerfiles for each microservice.

Use docker-compose for local development.

Optimize the Docker images with multi-stage builds.

Set up a shared network for microservices to communicate seamlessly.

By the end of this guide, your microservices will be containerized and ready for orchestration with Kubernetes in the next steps.

Step 1: Understanding Docker

1.1 What Is Docker?

Docker is a platform that allows you to package applications and their dependencies into lightweight containers. Containers run consistently regardless of the underlying environment.

Why Docker?

Ensures environment consistency.

Simplifies dependency management.

Makes scaling and deployment easier.

1.2 Key Docker Concepts

Dockerfile: Instructions to build a Docker image.

Image: A lightweight, standalone package of software.

Container: A runtime instance of an image.

Docker Compose: A tool to define and run multi-container applications.

Step 2: Writing Dockerfiles for Microservices

Each microservice will have its own Dockerfile. Let’s start with the User Service.

2.1 Dockerfile for the User Service

Base Image: Use a slim Python image to keep the image small and quick to pull.

FROM python:3.10-slim

Working Directory: Set the working directory inside the container.

WORKDIR /app

Dependencies: Install the required Python packages from requirements.txt. Copying this file before the rest of the code lets Docker reuse the cached dependency layer when only the application code changes.

COPY requirements.txt .

RUN pip install --no-cache-dir -r requirements.txt

Application Code: Copy the service code into the container.

COPY . .

Command: Run the service with Gunicorn rather than the Django development server, which is not meant for production. Gunicorn must be listed in requirements.txt.

CMD ["gunicorn", "user_service.wsgi:application", "--bind", "0.0.0.0:8000"]

Final Dockerfile:

# Dockerfile for User Service

FROM python:3.10-slim

WORKDIR /app

COPY requirements.txt .

RUN pip install --no-cache-dir -r requirements.txt

COPY . .

CMD ["gunicorn", "user_service.wsgi:application", "--bind", "0.0.0.0:8000"]
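Gunicorn settings can also live in a Python config file instead of growing the CMD line. The following is a minimal sketch, assuming a file named gunicorn.conf.py placed next to the service code in /app; the worker count and timeout are illustrative starting values, not tuned recommendations for this project.

```python
# gunicorn.conf.py (assumed file name and location: the /app working directory)
import os

# Listen on all interfaces so the port published by Docker is reachable.
bind = "0.0.0.0:8000"

# Starting point suggested by the Gunicorn docs: (2 x cores) + 1 workers.
workers = (os.cpu_count() or 1) * 2 + 1

# Restart workers that stay silent for longer than this many seconds.
timeout = 30

# "-" sends access logs to stdout and error logs to stderr,
# which is where Docker collects container logs from.
accesslog = "-"
errorlog = "-"
```

Recent Gunicorn versions read ./gunicorn.conf.py from the working directory by default; with older versions, pass it explicitly with -c gunicorn.conf.py. Options given on the command line, such as --bind, take precedence over the file.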

2.2 Dockerfile for the Trading Service

Repeat the same process for the Trading Service:

# Dockerfile for Trading Service

FROM python:3.10-slim

WORKDIR /app

COPY requirements.txt .

RUN pip install --no-cache-dir -r requirements.txt

COPY . .

CMD ["gunicorn", "trading_service.wsgi:application", "--bind", "0.0.0.0:8000"]

2.3 Optimize Dockerfiles with Multi-Stage Builds

Multi-stage builds help reduce the size of the final Docker image by separating the build and runtime environments.

Example for User Service:

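# Multi-stage Dockerfile

FROM python:3.10-slim AS builder

WORKDIR /app

COPY requirements.txt .

# Install into a separate prefix; a plain pip install writes to /usr/local, not /app

RUN pip install --no-cache-dir --prefix=/install -r requirements.txt

FROM python:3.10-slim

WORKDIR /app

# Copy only the installed packages from the builder stage into the runtime image

COPY --from=builder /install /usr/local

COPY . .

CMD ["gunicorn", "user_service.wsgi:application", "--bind", "0.0.0.0:8000"]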

Step 3: Using Docker Compose for Local Development

To simplify running multiple services locally, we use Docker Compose.

3.1 Create a docker-compose.yml File

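The docker-compose.yml file defines the configuration for all microservices. Compose attaches every service in the file to a shared default network, so containers can reach each other by service name; this is why the DATABASE_URL values below use db as the database host.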

Example for User and Trading Services:

version: '3.8'

services:
  user_service:
    build:
      context: ./user_service
    ports:
      - "8001:8000"
    environment:
      - DATABASE_URL=postgres://user:password@db:5432/user_service_db
    depends_on:
      - db

  trading_service:
    build:
      context: ./trading_service
    ports:
      - "8002:8000"
    environment:
      - DATABASE_URL=postgres://user:password@db:5432/trading_service_db
    depends_on:
      - db

  db:
    image: postgres
    environment:
      POSTGRES_USER: user
      POSTGRES_PASSWORD: password
      # The postgres image creates only this database on first start;
      # trading_service_db has to be created separately (for example with an init script).
      POSTGRES_DB: user_service_db
    ports:
      - "5432:5432"
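Django does not read DATABASE_URL on its own, so each service has to turn that variable into a DATABASES entry. The following is a minimal sketch using only the standard library; the helper name database_config_from_url is hypothetical, and a package such as dj-database-url can do the same job.

```python
from urllib.parse import unquote, urlparse


def database_config_from_url(url: str) -> dict:
    """Convert a postgres:// URL into a Django-style DATABASES entry."""
    parsed = urlparse(url)
    if parsed.scheme not in ("postgres", "postgresql"):
        raise ValueError(f"Unsupported database scheme: {parsed.scheme!r}")
    return {
        "ENGINE": "django.db.backends.postgresql",
        "NAME": parsed.path.lstrip("/"),
        # Credentials may be percent-encoded inside a URL, so decode them.
        "USER": unquote(parsed.username or ""),
        "PASSWORD": unquote(parsed.password or ""),
        "HOST": parsed.hostname or "",
        "PORT": str(parsed.port or 5432),
    }


# Possible use in settings.py (assumed location):
# import os
# DATABASES = {"default": database_config_from_url(os.environ["DATABASE_URL"])}
```

With the Compose file above, the User Service would end up with HOST set to db and NAME set to user_service_db.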

3.2 Running the Services

Start the services:

docker-compose up --build

Access the User Service:

- Open your browser or Postman: http://localhost:8001/api/users/
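If a service fails on its first start with a database connection error, keep in mind that depends_on in its short form only orders container startup; it does not wait for Postgres to accept connections. One common workaround is to wait for the port before starting the application. The sketch below assumes a hypothetical helper named wait_for_port that would be called from an entrypoint script.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # A successful connect means something is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Refused, unresolved, or timed out: pause briefly and retry.
            time.sleep(interval)
    return False


# Possible use before launching Gunicorn ("db" and 5432 come from the Compose file):
# if not wait_for_port("db", 5432):
#     raise SystemExit("database did not become reachable in time")
```

An open port shows only that the server is listening, not that migrations have run, so treat this as a startup aid rather than a full readiness check.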

Step 4: Testing Dockerized Services

Validate Containers:

Check running containers:

docker ps

Test APIs:

Use curl or Postman to test the endpoints:

curl -X GET http://localhost:8001/api/users/

curl -X POST http://localhost:8002/api/trades/

Inspect Logs:

View container logs for debugging:

docker-compose logs user_service

Step 5: Best Practices for Dockerization

Keep Images Small:

Use multi-stage builds.

Avoid installing unnecessary packages.

Environment Variables:

- Store sensitive data in environment variables, not hardcoded in Dockerfiles.
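On the Django side, settings can read these values at startup and fail early when one is missing. This is a minimal sketch; the helper names and the DJANGO_SECRET_KEY and DJANGO_DEBUG variable names are assumptions for illustration.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable or fail with a clear error."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Missing required environment variable: {name}")
    return value


def env_bool(name: str, default: bool = False) -> bool:
    """Interpret common truthy strings ("1", "true", "yes", "on") as True."""
    raw = os.environ.get(name)
    if raw is None:
        return default
    return raw.strip().lower() in {"1", "true", "yes", "on"}


# Possible use in settings.py (variable names are assumptions):
# SECRET_KEY = require_env("DJANGO_SECRET_KEY")
# DEBUG = env_bool("DJANGO_DEBUG", default=False)
```

Failing at import time makes a misconfigured container stop immediately instead of serving requests with an empty secret.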

Health Checks:

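Add health checks in docker-compose.yml to ensure services are running. The test command runs inside the container, so curl must be installed in the image; python:3.10-slim does not include it.

healthcheck:
  test: ["CMD", "curl", "-f", "http://localhost:8000/health"]
  interval: 30s
  timeout: 10s
  retries: 3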
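Because the health check command runs inside the service container, and the slim Python images do not ship curl, a small Python script can do the probing instead. The sketch below uses only the standard library; the file name healthcheck.py and the function names are assumptions, and the /health endpoint is the one used in the check above.

```python
import urllib.error
import urllib.request


def is_healthy(url: str, timeout: float = 5.0) -> bool:
    """Return True if the URL answers with a 2xx status within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except (urllib.error.URLError, OSError):
        # Covers refused connections, timeouts, and 4xx/5xx responses.
        return False


def main() -> int:
    """Exit-code convention for Docker health checks: 0 is healthy, 1 is not."""
    return 0 if is_healthy("http://localhost:8000/health") else 1


# When saved as healthcheck.py, end the file with:
# raise SystemExit(main())
```

The Compose test entry would then become ["CMD", "python", "healthcheck.py"], keeping the same interval, timeout, and retries.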

Shared Volumes:

Use volumes for sharing data between services or persisting database data:

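# Under the db service:
volumes:
  - db_data:/var/lib/postgresql/data

# At the top level of docker-compose.yml, declare the named volume:
volumes:
  db_data: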

Conclusion

At the end of Part 2, your microservices are containerized using Docker, and you have a working local setup using Docker Compose. You’ve learned how to:

Write efficient Dockerfiles.

Use Docker Compose to manage multiple containers.

Optimize images for production.

Next Steps: In Part 3, we will deploy these Dockerized microservices to a Kubernetes cluster, setting up production-ready orchestration and scaling.

Happy Deployment!